Beyond the chatbot: building multi-agent systems and Agentic AI into your product strategy.

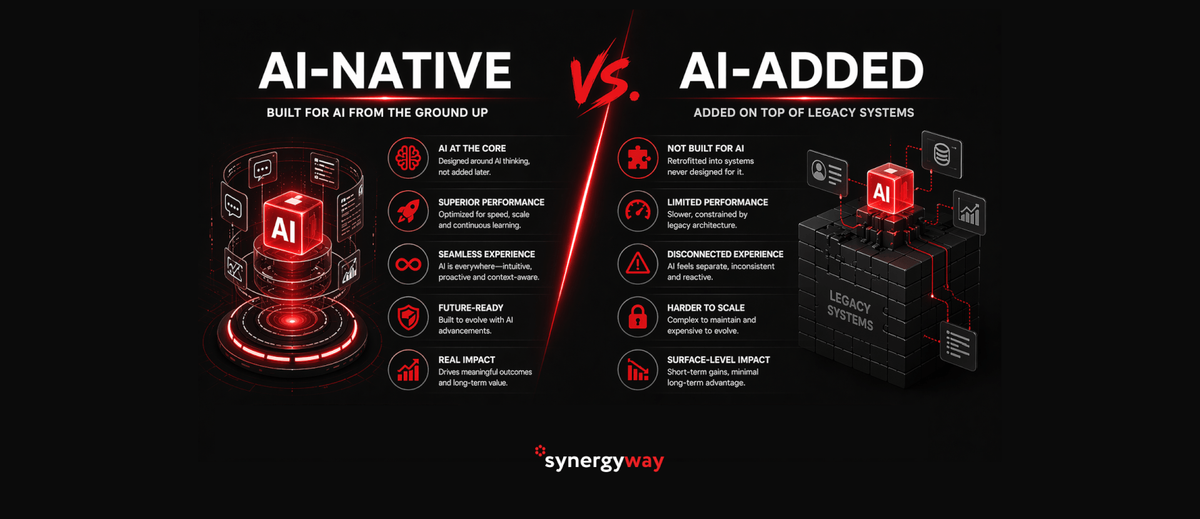

The year 2026 marks a structural reset in enterprise automation. The initial wave of “generative AI fever,” characterized by sprinkling chatbots and copilots across departments, has given way to a more disciplined, architectural era. For startups and mid-size organizations, the question is no longer whether to use AI but how to architect it: is your product merely “AI-added” or “AI-native”?

Despite the hype, the industry is currently facing an “Agentic Reality Check.” While approximately 38% of firms are actively piloting AI agents, only 11% have successfully moved these systems into full production. This gap exists because many organizations are still treating AI as a conversational layer rather than an execution layer. Moving beyond the pilot stage requires a fundamental shift toward Multiagent Systems (MAS) – coordinated networks of specialized AI that don’t just chat about work, but actually complete it.

From Copilots to Agents: The Evolution of Business Logic in 2026

In 2024 and 2025, the “copilot” was the dominant paradigm — a single assistant looping over basic tools like search and text generation. In 2026, business logic has evolved from these linear assistants to autonomous agents that can plan, act, recover from errors, and escalate complex cases to humans.

The breakthrough of 2026 is the transition from solo agents to Multiagent Systems (MAS). Think of this as the shift from a group of freelancers to a synchronized team. A single agent often bottlenecks on context limits and reasoning failures; a multi-agent architecture, however, decomposes a goal into specialist subproblems — assigning a “Planning Agent,” a “Research Agent,” and a “Quality-Checking Agent” to work in parallel. This architecture allows for:

- Parallelism: Reducing wall-clock time by running tasks like data extraction and policy review concurrently.

- Specialization: Using smaller, optimized models for specific tasks like SQL querying or code generation rather than one general model for everything.

- Governance: Separating the “Execution Agent” from a “Policy Agent” that can approve or deny actions based on company guardrails.
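The three properties above can be sketched in a few lines. This is a minimal illustration, not a production orchestrator: the agent functions are hypothetical stand-ins for model or tool calls, and the guardrail values (`ALLOWED_ACTIONS`, `MAX_REFUND_AMOUNT`) are invented for the example.

```python
import asyncio

# Illustrative guardrails (assumed values); real limits would come from company policy.
ALLOWED_ACTIONS = {"issue_refund", "send_email"}
MAX_REFUND_AMOUNT = 500.0


async def research_agent(goal: str) -> str:
    """Specialist agent: stands in for a model call that extracts data."""
    await asyncio.sleep(0.01)  # simulates I/O-bound work
    return f"data extracted for '{goal}'"


async def policy_review_agent(goal: str) -> str:
    """Specialist agent: stands in for a model call that reviews policy."""
    await asyncio.sleep(0.01)
    return f"policy reviewed for '{goal}'"


def policy_agent(action: dict) -> bool:
    """Governance: approve or deny a proposed action against the guardrails."""
    return (
        action["type"] in ALLOWED_ACTIONS
        and action.get("amount", 0.0) <= MAX_REFUND_AMOUNT
    )


async def run_goal(goal: str, proposed_action: dict) -> dict:
    # Parallelism: both specialists run concurrently rather than in sequence.
    extraction, review = await asyncio.gather(
        research_agent(goal), policy_review_agent(goal)
    )
    # Execution only proceeds if the separate Policy Agent approves.
    status = "executed" if policy_agent(proposed_action) else "escalated_to_human"
    return {"extraction": extraction, "review": review, "status": status}


small = asyncio.run(run_goal("refund order 1042", {"type": "issue_refund", "amount": 80.0}))
large = asyncio.run(run_goal("refund order 1043", {"type": "issue_refund", "amount": 9000.0}))
print(small["status"], large["status"])
```

Keeping `policy_agent` as a separate function from the execution path means the guardrails can be audited or changed without touching the agents that do the work.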

Domain-Specific Models vs. General LLMs: Choosing for Accuracy and Compliance

Mid-market organizations are increasingly pivoting from generic LLMs (like GPT-4 or Claude 3.5) to Domain-Specific Language Models (DSLMs) for their business-critical workflows. While generic models are impressive, they often fall short in high-stakes environments due to the “Unreliability Tax”—the cost of mitigating hallucinations and context overflow.

DSLMs offer a strategic advantage in three key areas:

- Financial Efficiency: DSLMs can cut development costs by up to 50% and shorten deployment because they need less “prompt engineering” to reach high accuracy.

- Logic Density: Using techniques like Instruction Pre-training, a specialized 500M-parameter model can match the performance of a 1B-parameter general model while training on roughly one third of the data.

- Compliance: Smaller, open-source DSLMs can be deployed on-premises, supporting sovereign AI strategies and ensuring data transparency in line with emerging regulations such as the EU AI Act.

For 2026, the benchmark for “production-ready” is no longer just a high MMLU score (which measures general knowledge). Instead, leaders look at SWE-bench Verified, which measures a model’s ability to resolve real-world software issues. In these tests, specialized models often outperform frontier giants in practical utility and factual consistency.

The ROI of Agentic AI: When to Expect Real Payback

The ROI of agentic AI is no longer theoretical. In 2026, leading organizations are seeing measurable outcomes across several verticals:

- Customer Service: Agents now handle 50–65% of inquiries without human intervention, leading to a 20–30% reduction in support operating costs.

- Finance: Financial institutions report a 20–30% reduction in fraud investigation time by using agents for automated document review.

- Sales Ops: Companies using agentic flows for lead qualification have seen 10–20% increases in average order value (AOV).

To calculate the expected payback, organizations use a standard ROI formula:

$$\text{ROI} = \frac{\text{Total Benefits} - \text{Total Costs}}{\text{Total Costs}} \times 100$$
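As a quick worked example of the formula above, here is a small sketch. The dollar figures are purely illustrative assumptions, not data from any real deployment.

```python
def roi_percent(total_benefits: float, total_costs: float) -> float:
    """ROI as a percentage: (benefits - costs) / costs * 100."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs * 100


# Assumed figures: a $100,000 implementation that returns $130,000 in benefits.
example_roi = roi_percent(total_benefits=130_000, total_costs=100_000)
print(f"ROI: {example_roi:.1f}%")
```

A negative result means the project has not yet paid for itself, which is typical in the first months after launch.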

In the supply chain sector alone, implementations ranging from $20,000 to $150,000 are delivering 20–40% cost reductions through autonomous optimization. However, Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027, with escalating costs among the main reasons. That makes “Inference Economics,” the rapidly rising cost of running agents that communicate around the clock, a first-order design concern.

Architecting for Scale: Vector Databases and Inference Economics

As the volume of agentic data grows, the “Inference Paradox” emerges: the cost per token is falling (now as low as $0.075 per million tokens for budget tiers), yet the total volume of tokens generated is growing by as much as 1000x as agents use longer “reasoning” loops, so total spend can still rise.
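The arithmetic behind this paradox is easy to check. In the sketch below, the $0.075 price and the 1000x growth come from the paragraph above; the baseline of 1 billion tokens per month at $1.00 per million tokens is an assumption chosen only to make the comparison concrete.

```python
def monthly_spend(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """Total monthly spend in USD for a given token volume and unit price."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


# Assumed baseline: 1 billion tokens per month at $1.00 per million tokens.
before = monthly_spend(1e9, 1.00)
# Budget-tier price from the text, with token volume grown 1000x.
after = monthly_spend(1e9 * 1000, 0.075)

print(f"before: ${before:,.0f}  after: ${after:,.0f}  ratio: {after / before:.0f}x")
```

Under these assumptions the unit price falls by more than 13x, yet the monthly bill grows roughly 75x, because volume growth outpaces the price drop.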

To manage these costs, the 2026 tech stack relies on two pillars:

1. The Vector Layer

Vector databases have become the “memory” of agentic systems, allowing them to retrieve relevant context in real time.

- pgvector: The standard for startups already using PostgreSQL; it handles up to 50M vectors with high efficiency.

- Pinecone/Zilliz: Managed powerhouses for organizations that are scaling to billions of vectors and want zero operational overhead.

- Weaviate: The go-to for “Hybrid Search,” blending traditional keyword matching with semantic vector similarity for high-accuracy RAG.
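To make “hybrid search” concrete, here is a toy, in-memory sketch in pure Python. It is not the API or scoring algorithm of Weaviate or any other product listed above: the three-dimensional vectors are hand-written stand-ins for real embeddings, and the `alpha` weighting and word-overlap keyword score are simplifying assumptions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Semantic signal: cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Keyword signal: fraction of query words that appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


def hybrid_search(query, query_vec, docs, alpha=0.5, top_k=2):
    """Blend both signals; alpha=1.0 is pure vector, alpha=0.0 is pure keyword."""
    scored = []
    for doc in docs:
        score = (alpha * cosine_similarity(query_vec, doc["vec"])
                 + (1 - alpha) * keyword_score(query, doc["text"]))
        scored.append((score, doc["id"]))
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:top_k]]


# Hypothetical documents with hand-written "embeddings".
DOCS = [
    {"id": "refund-policy", "text": "refund policy for damaged goods", "vec": [0.9, 0.1, 0.0]},
    {"id": "shipping-times", "text": "standard shipping times by region", "vec": [0.1, 0.9, 0.0]},
    {"id": "returns-howto", "text": "how to send an item back", "vec": [0.8, 0.2, 0.1]},
]

results = hybrid_search("refund policy", [1.0, 0.0, 0.0], DOCS)
print(results)
```

In this toy data, the document about sending an item back shares no words with the query but is still retrieved through vector similarity, while the exact keyword match ranks first.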

2. Infrastructure Optimization

Leading firms are offloading the KV cache (the “working memory” of a model) from expensive GPU memory to SSD storage, maintaining high performance at a much lower price point. With FP8 quantization, teams can roughly double their throughput with less than 2% loss in quality, which for a fixed workload can roughly halve the monthly GPU bill.
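A back-of-the-envelope sketch shows why these two levers matter. The model shape (32 layers, 8 key/value heads, head dimension 128) and the throughput numbers are hypothetical; the doubling of per-GPU throughput is taken from the claim above as an input, not derived by the code.

```python
import math


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_value: int) -> int:
    """KV cache size: keys and values (factor 2) for every layer, head, and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


def gpus_needed(required_tokens_per_s: float, tokens_per_s_per_gpu: float) -> int:
    """GPUs required to serve a fixed workload at a given per-GPU throughput."""
    return math.ceil(required_tokens_per_s / tokens_per_s_per_gpu)


# Hypothetical model shape and a single 8,192-token sequence.
shape = dict(layers=32, kv_heads=8, head_dim=128, seq_len=8192, batch=1)
fp16_gib = kv_cache_bytes(**shape, bytes_per_value=2) / 2**30
fp8_gib = kv_cache_bytes(**shape, bytes_per_value=1) / 2**30
print(f"KV cache per sequence: FP16 {fp16_gib:.2f} GiB, FP8 {fp8_gib:.2f} GiB")

# Assumed workload of 20,000 tokens/s; per-GPU throughput doubles from 2,500 to 5,000.
gpus_before = gpus_needed(20_000, 2_500)
gpus_after = gpus_needed(20_000, 5_000)
print(f"GPUs needed: {gpus_before} -> {gpus_after}")
```

Because the cache grows linearly with sequence length and batch size, long-running agent sessions are where offloading or lower-precision storage pays off most.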

Case Study: How Autonomous Agents are Reshaping Logistics and Supply Chains

The most dramatic application of multi-agent systems in 2026 is found in logistics. Traditionally, supply chains have operated in “firefighting mode,” reacting to disruptions. Today, they have moved to Autonomous Orchestration.

A leading logistics provider recently deployed an agentic network where:

- A Sourcing Agent monitors geopolitical news and suppliers’ financial signals in real time.

- A Logistics Agent detects a port delay and automatically reroutes shipments to an alternative hub.

- A Commercial Agent renegotiates supplier contracts autonomously based on the adjusted delivery times.
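The handoff between the Logistics Agent and the Commercial Agent can be sketched as a simple chain of functions. Everything here is hypothetical: the delay figures, the three-day threshold, and the simplification that transit time is the same through every hub. A real system would also need cost, capacity, and contract constraints.

```python
from dataclasses import dataclass, replace

DELAY_THRESHOLD_DAYS = 3  # assumed trigger for rerouting


@dataclass(frozen=True)
class Shipment:
    shipment_id: str
    hub: str
    base_transit_days: int


def eta_days(shipment: Shipment, port_delays: dict[str, int]) -> int:
    """Transit time plus the current delay at the shipment's hub."""
    return shipment.base_transit_days + port_delays.get(shipment.hub, 0)


def logistics_agent(shipment: Shipment, port_delays: dict[str, int]) -> Shipment:
    """Reroute to the least-delayed hub when the current hub exceeds the threshold."""
    if port_delays.get(shipment.hub, 0) <= DELAY_THRESHOLD_DAYS:
        return shipment
    best_hub = min(port_delays, key=port_delays.get)
    return replace(shipment, hub=best_hub)


def commercial_agent(shipment: Shipment, port_delays: dict[str, int]) -> dict:
    """Draft a contract amendment that reflects the adjusted delivery time."""
    return {
        "shipment_id": shipment.shipment_id,
        "hub": shipment.hub,
        "revised_eta_days": eta_days(shipment, port_delays),
    }


# Illustrative delay feed (days of delay per hub).
port_delays = {"Rotterdam": 6, "Antwerp": 1, "Hamburg": 2}
original = Shipment("SHP-1", hub="Rotterdam", base_transit_days=10)

rerouted = logistics_agent(original, port_delays)
amendment = commercial_agent(rerouted, port_delays)
print(amendment)
```

Each agent consumes the previous agent's output, so the chain can be extended, for example with an approval step before the amendment is sent.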

The Results:

- 30% reduction in delivery time.

- 25% transportation cost savings through autonomous route optimization.

- 70% reduction in manual communication effort as agents handle carrier follow-ups via email and document extraction.

Architecting Your 2026 Strategy with Synergy Way

The transition from “chatting with data” to “executing with agents” is the defining challenge for today’s SMBs. Moving beyond the 11% production barrier requires more than just an API key; it requires a coordinated data stack, domain-specific tuning, and a multi-agent orchestration layer.

Don’t let your AI strategy get stuck in “pilot purgatory.” At Synergy Way, we specialize in architecting the “Intelligent Enterprise.”

We can help you:

- Build a Multiagent System (MAS) to automate complex back-office workflows.

- Optimize your Inference Economics to reduce your monthly AI spend by up to 60%.

- Deploy a Domain-Specific Model that ensures 95%+ accuracy in regulated fields.

Contact Synergy Way today to evaluate your agentic readiness and build a product that is truly AI-native.